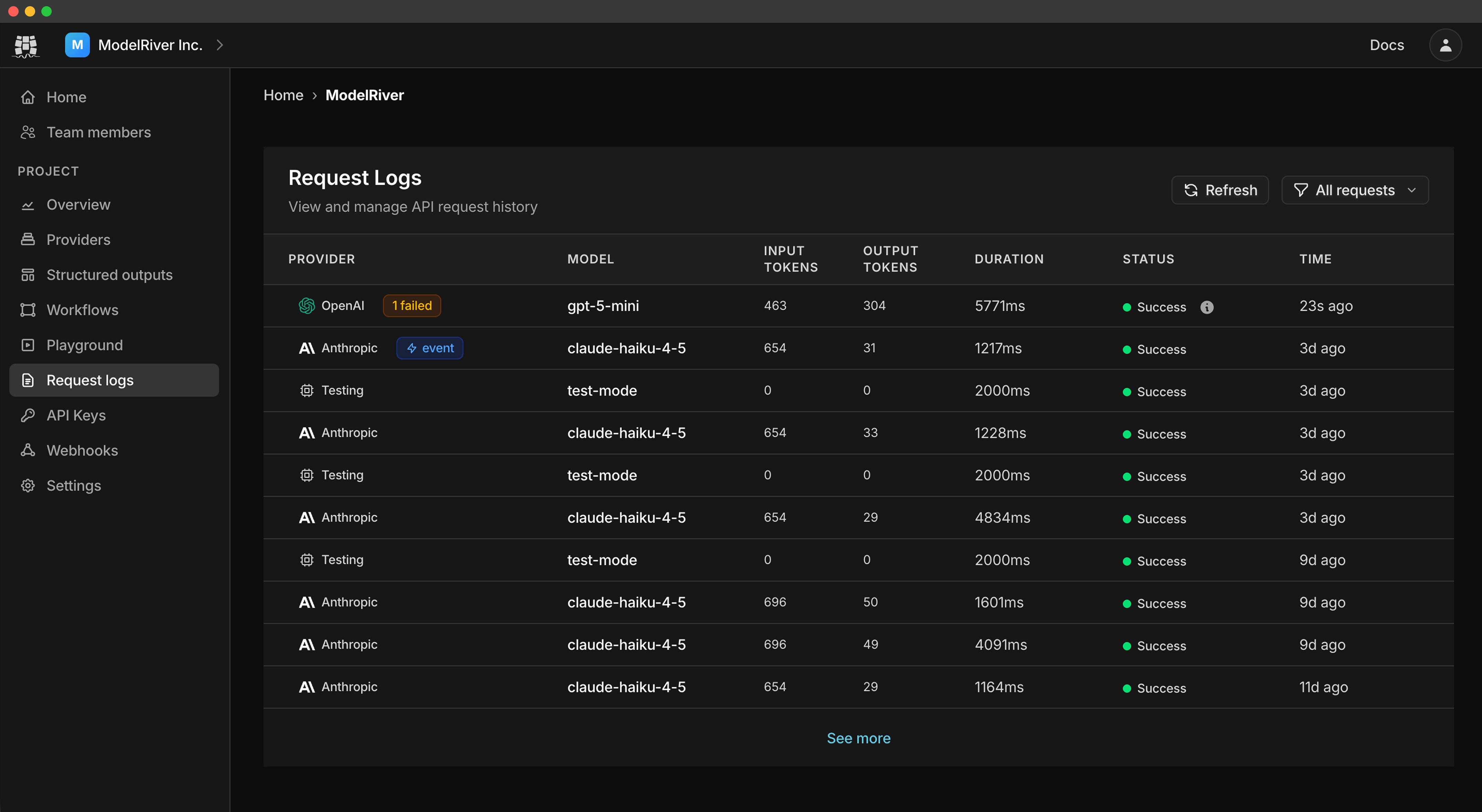

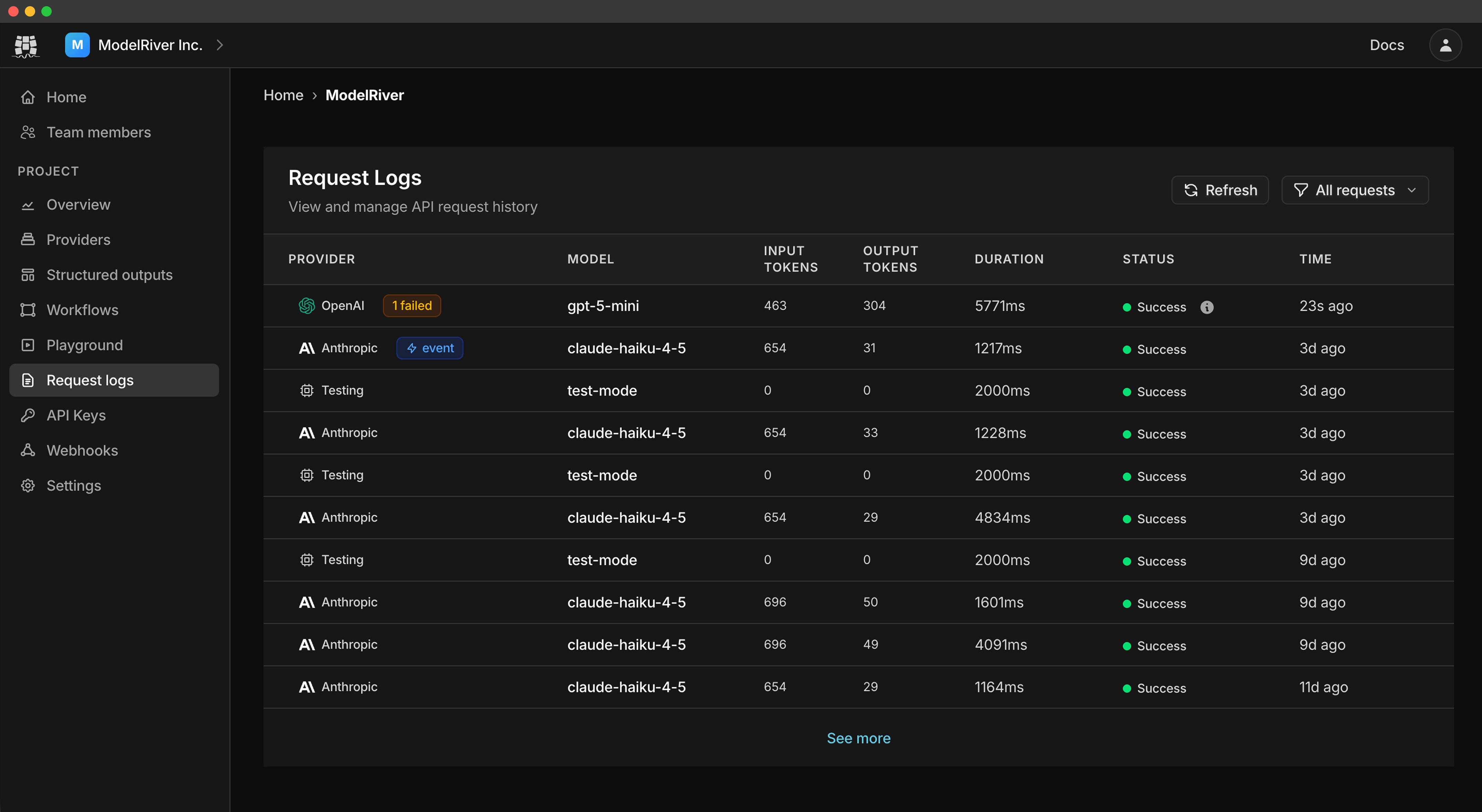

Watch requests flow through

See how ModelRiver handles requests in real time with intelligent routing and failover

One API for all providers. Built-in failover keeps you online.

Free to start · No credit card · 5 min setup

```shell
curl https://api.modelriver.com/v1/ai/async \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -d '{
    "workflow": "book_review",
    "messages": [{"role": "user", "content": "Hello!"}],
    "user_id": "user_12345",
    "task_id": "task_67890"
  }'
```

Ship faster with built-in reliability and insights.

When one provider fails, requests automatically route to backups. Your app stays online.

Control costs and prevent abuse with limits per user, IP, or project.

Show responses as they generate for a snappy, real-time experience.

Get notified when requests complete. Perfect for background processing.

AI generates, you process, then respond. Perfect for tool calling and complex multi-step workflows.

Get consistent JSON responses that match your schema. No more parsing surprises.

Official tools to accelerate your development

One endpoint, one API key. Switch providers without changing your code.
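As a rough illustration of what "one endpoint, one API key" could look like from client code, here is a minimal Python sketch using only the standard library. The endpoint URL and the request fields come from the curl example on this page; the `Content-Type` header and the helper names `build_async_request` and `summarize_response` are assumptions made for illustration, not part of any official ModelRiver SDK. The second helper simply reads the two response shapes this page shows (a `pending` acknowledgement and a `success` result).

```python
import json
import urllib.request

# Endpoint taken from the curl example on this page.
ASYNC_URL = "https://api.modelriver.com/v1/ai/async"


def build_async_request(api_key, workflow, content, user_id, task_id):
    """Build (but do not send) a POST request for the async endpoint."""
    payload = {
        "workflow": workflow,
        "messages": [{"role": "user", "content": content}],
        "user_id": user_id,
        "task_id": task_id,
    }
    return urllib.request.Request(
        ASYNC_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            # Assumption: the API expects a JSON content type.
            "Content-Type": "application/json",
        },
        method="POST",
    )


def summarize_response(body):
    """Reduce either response shape shown on this page to a small dict."""
    status = body.get("status")
    if status == "pending":
        # The acknowledgement carries the details needed to listen for the result.
        return {
            "done": False,
            "websocket_url": body["websocket_url"],
            "websocket_channel": body["websocket_channel"],
            "ws_token": body["ws_token"],
        }
    if status == "success":
        usage = body.get("meta", {}).get("usage", {})
        return {
            "done": True,
            "data": body["data"],
            "model": body.get("model"),
            "total_tokens": usage.get("total_tokens"),
        }
    raise ValueError(f"unexpected status: {status!r}")
```

To actually send the request, pass the result of `build_async_request(...)` to `urllib.request.urlopen`, decode the body with `json.load`, and hand it to `summarize_response`. Because the workflow name is the only provider-related value in the payload, the calling code stays the same when the workflow is reconfigured to use a different provider.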

```json
{
  "workflow": "my_book_task",
  "messages": [
    {
      "role": "user",
      "content": "Hello!"
    }
  ],
  "user_id": "user_12345",
  "task_id": "task_67890"
}
```

```json
{
  "message": "success",
  "status": "pending",
  "channel_id": "a1b2c3d4-...",
  "ws_token": "one-time-websocket-token",
  "websocket_url": "wss://api.modelriver.com/socket",
  "websocket_channel": "ai_response:PROJECT_ID:a1b2c3d4-..."
}
```

```json
{
  "message": "success",
  "status": "success",
  "data": {
    "response": "Hello! How can I help?",
    "intent": "greeting",
    "confidence": 0.95
  },
  "model": "gpt-5.2",
  "customer_data": {
    "user_id": "user_12345",
    "task_id": "task_67890"
  },
  "meta": {
    "duration_ms": 1250,
    "usage": {
      "prompt_tokens": 21,
      "completion_tokens": 42,
      "total_tokens": 63
    }
  }
}
```

Try your workflows in a free playground before going live. Validate responses, test failovers, and catch issues early.

Safe testing mode

Run workflows exactly like production without affecting live users.

Free playground

Test unlimited requests in the playground at no extra cost.

Validate outputs

Check that responses match your expected format before going live.

Testing mode

Same settings as production, safe sandbox.

Response preview

See exactly what your app will receive.

```json
{
  "data": {
    "todo": {
      "id": "todo_123",
      "title": "Finish project proposal",
      "description": "Complete the first draft and review key points",
      "completed": false,
      "priority": "high",
      "due_date": "2025-09-25",
      "created_at": "2025-09-23T12:05:00Z",
      "updated_at": "2025-09-23T12:05:00Z",
      "tags": ["work", "important"]
    }
  }
}
```

```json
{
  "data": {
    "todo": {
      "type": "object",
      "fields": {
        "id": { "type": "string", "example": "todo_123" },
        "title": { "type": "string", "example": "Finish project proposal" },
        "description": {
          "type": "string",
          "example": "Complete the first draft and review key points"
        },
        "completed": { "type": "boolean", "example": false },
        "priority": {
          "type": "string",
          "enum": ["low", "medium", "high"],
          "example": "high"
        },
        "due_date": { "type": "string", "format": "date", "example": "2025-09-25" },
        "created_at": {
          "type": "string",
          "format": "date-time",
          "example": "2025-09-23T12:05:00Z"
        },
        "updated_at": {
          "type": "string",
          "format": "date-time",
          "example": "2025-09-23T12:05:00Z"
        },
        "tags": {
          "type": "string[]",
          "example": ["work", "important"]
        }
      }
    }
  }
}
```
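To make the idea of validating outputs concrete, the sketch below checks a response object against a `fields` description like the one shown above. It is only an illustration: the helper names are hypothetical, the meaning of `type`, `enum`, and `format` is inferred from their names, and it assumes every listed field is required, which the page does not state.

```python
from datetime import date, datetime


def _matches_type(value, type_name):
    """Check a value against the type names used in the schema shown above."""
    if type_name == "string":
        return isinstance(value, str)
    if type_name == "boolean":
        return isinstance(value, bool)
    if type_name == "string[]":
        return isinstance(value, list) and all(isinstance(v, str) for v in value)
    return False  # unknown type names are treated as mismatches


def _matches_format(value, fmt):
    """Check ISO 8601 date and date-time strings; other formats pass through."""
    try:
        if fmt == "date":
            date.fromisoformat(value)
        elif fmt == "date-time":
            # Older Python versions reject a trailing "Z", so normalize it.
            datetime.fromisoformat(value.replace("Z", "+00:00"))
    except ValueError:
        return False
    return True


def validate(obj, fields):
    """Return a list of problems; an empty list means the object is valid."""
    problems = []
    for name, spec in fields.items():
        # Assumption: every field listed in the schema is required.
        if name not in obj:
            problems.append(f"{name}: missing")
            continue
        value = obj[name]
        if not _matches_type(value, spec["type"]):
            problems.append(f"{name}: expected {spec['type']}")
            continue
        if "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name}: not one of {spec['enum']}")
        if "format" in spec and not _matches_format(value, spec["format"]):
            problems.append(f"{name}: bad {spec['format']}")
    return problems
```

Calling `validate(response["data"]["todo"], schema["data"]["todo"]["fields"])` on the two JSON documents above would be one way to run this check in a test suite before going live.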